Implementing AI in Academic Medicine

A guide to governance and principled practice

Preface

I started writing this framework in 2023, when the dominant institutional question at most academic medical centers was still “should we let people use ChatGPT?” That question has been overtaken by events. The question now is how to govern AI programs that are already real, already large, and already affecting patients, trainees, researchers, and staff in ways that most institutions do not yet fully see.

This book grew out of a working document — an attempt to organize the range of AI-related decisions an AMC has to make into something coherent enough to act on. It is not a strategic plan, and it is not meant to be prescriptive. Every institution has different constraints, different governance history, and different places where the technology has already landed. What I hope to offer is a framework flexible enough to be useful across those differences.

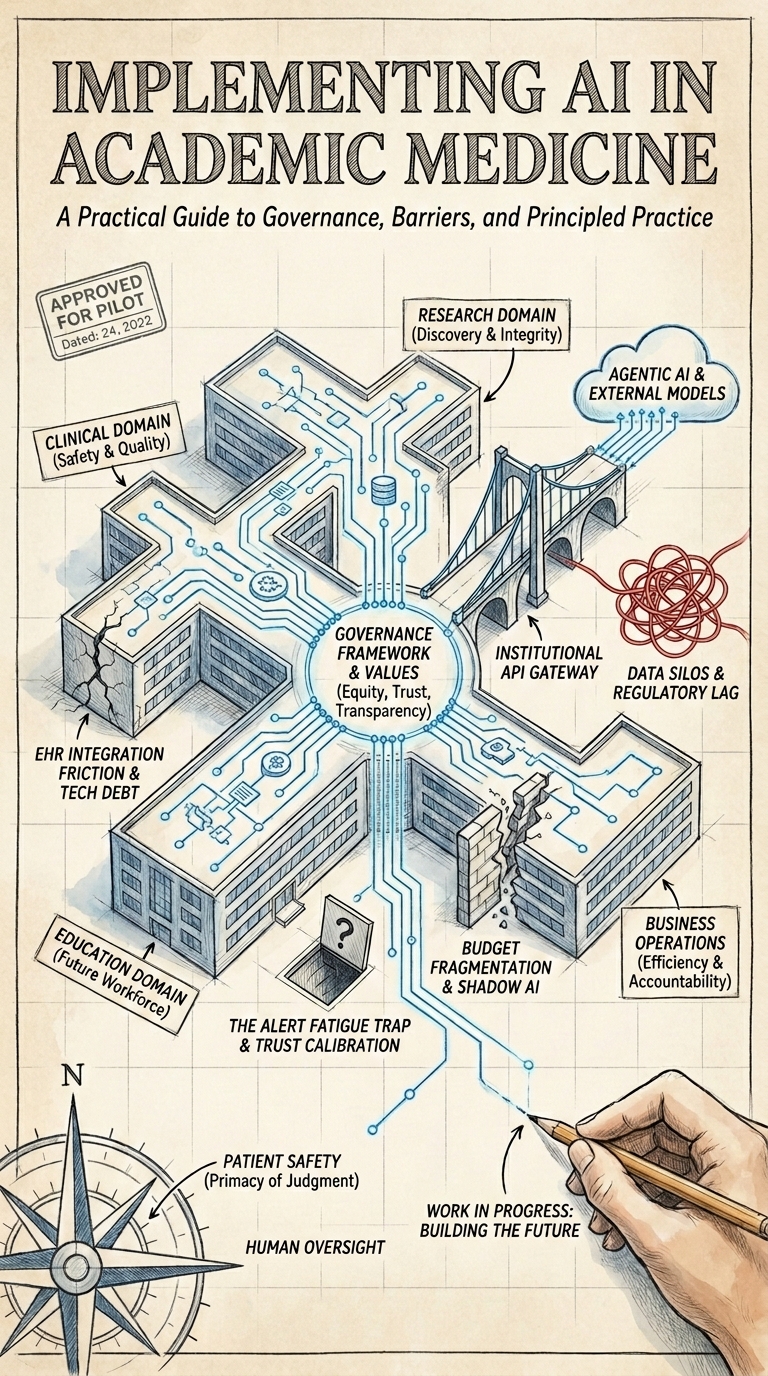

The central structural insight, and the one I keep returning to, is that an AMC is not a single AI deployment environment. It is four. Clinical care, research, education, and business operations each have different risk profiles, different regulatory obligations, different definitions of success, and different leadership structures. A governance program that treats them as a single domain will either be so restrictive that the research and education communities route around it, or so permissive that clinical AI gets deployed without the oversight patient safety requires. The domain structure in this framework is an attempt to hold that complexity without collapsing it.

Across all four domains, the same five operational questions recur. Who can access what data and under what conditions? What technical infrastructure and security controls are required? What are the ethical and regulatory obligations? How does the institution build and sustain the workforce capacity to use AI responsibly? And who manages the program across its full lifecycle, from the first intake request to eventual decommissioning? These are the workstreams — the horizontal dimension of the framework — and they are where most of the practical governance work actually lives.

The chapters that have grown up around this framework are substantially more detailed than the working document I started with. They draw on thinking from peer institutions, published governance frameworks, and the accumulating evidence on what makes clinical AI deployments succeed or fail. I have tried to be honest about what is well established and what is still contested, and to flag where the regulatory landscape is still moving.

This is a living document. The regulatory environment described in the governance chapter will continue to change. The AI tools described in the domain chapters will be superseded. The governance programs at peer institutions will evolve. The version you are reading is only as current as its last revision; the date at the top of each chapter is your guide to how much that should concern you.

It is also, itself, a product of AI collaboration. The research, drafting, and ongoing maintenance of this book are conducted with the assistance of large language models — primarily Claude and Gemini — working under human direction and review. The workflow appendix (Appendix D — How This Book Was Written: A Multi-Model AI Authorship Workflow) describes that process in detail, including where the AI contributes most and where human judgment remains the necessary check.

Scope and content will continue to evolve. All of the material is open for comment, contributions are welcome via the GitHub repo, and reuse and sharing are encouraged.